In an era where algorithms whisper secrets into the silicon veins of our digital world, a quiet revolution hums beneath the surface—one that doesn’t just mimic human creativity but reshapes the very fabric of thought, trust, and truth. Generative AI, with its uncanny ability to conjure prose, poetry, and even policy from the ether of data, has ignited a global fascination. Yet, beneath the dazzle of its outputs lies a labyrinth of ethical quandaries that demand more than just awe—they demand vigilance, wisdom, and a willingness to confront the shadows of innovation. This is not merely a course in generative AI ethics; it’s a journey into the heart of what it means to create, consume, and coexist with machines that learn to think like us.

Why does this topic captivate us so deeply? Perhaps it’s the paradox of control—here we are, architects of silicon sentience, yet haunted by the specter of losing the reins. Or maybe it’s the thrill of standing at the precipice of a new Renaissance, where art, science, and ethics collide in a symphony of sparks and smoke. Whatever the reason, one thing is clear: the ethics of generative AI are not a footnote in the story of progress; they are the spine holding the narrative upright.

The Alchemy of Creation: Where Code Meets Conscience

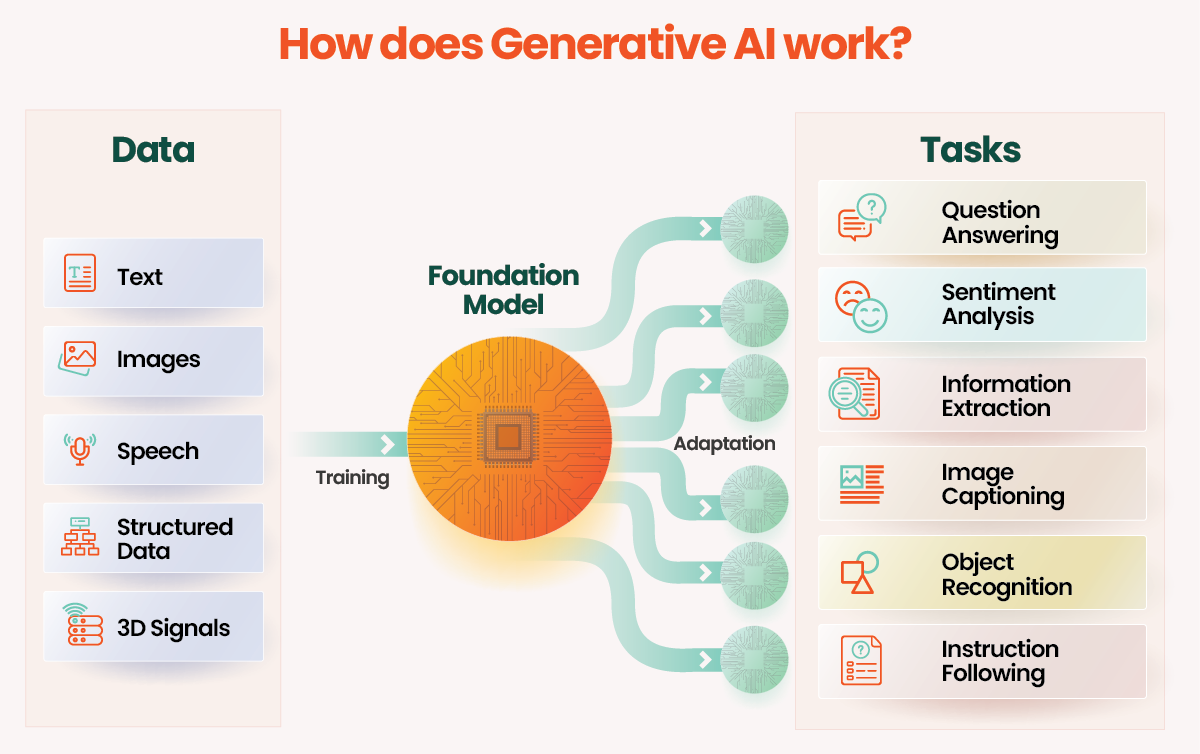

Generative AI doesn’t just regurgitate information; it transmutes it. Like a modern-day alchemist, it takes the base metals of raw data and forges them into gold: coherent sentences, evocative images, even symphonies. But alchemy has a dark side. The ethical stakes are not just about what is created, but how, why, and for whom. Consider the training data: a vast, largely unfiltered ocean of human expression. Embedded within it are biases, prejudices, and unspoken assumptions that the AI, in its relentless pattern-matching, can reproduce and amplify. A model trained on predominantly Western literature may struggle to capture the nuances of Eastern proverbs; one fed on historical texts rife with gender stereotypes might perpetuate them in its outputs. The result? A mirror held up to humanity’s flaws, polished to a disconcerting sheen.

The alchemy of creation extends beyond bias. It touches on ownership: the question of who, or what, deserves credit for an AI-generated masterpiece. Is it the developer who built the model? The user who refined the prompt? The dataset curators who unknowingly seeded the machine’s mind? Legal frameworks lag far behind the technology, leaving creators, corporations, and consumers in a limbo where the only certainty is uncertainty. And then there’s the specter of deepfakes, where the line between art and deception blurs into oblivion. A voice cloned from a few seconds of audio can dupe loved ones; a fabricated image can sway public opinion before anyone has had the chance to verify it. The power to create is also the power to deceive, and the ethical compass must be calibrated to navigate these treacherous waters.

The Illusion of Neutrality: Data as the Silent Puppeteer

Generative AI is often touted as a neutral tool, a blank canvas upon which human intention is painted. But data is never neutral. It is a living archive of human history, fraught with omissions, distortions, and power imbalances. A dataset scraped from the internet without context is a minefield of misinformation, where the loudest voices drown out the silenced ones. The result? An AI that speaks in the tongue of the dominant culture, reinforcing the status quo while claiming objectivity.

This illusion of neutrality extends to the outputs themselves. When an AI generates a poem in the style of Emily Dickinson or a business plan in the tone of a Silicon Valley CEO, it’s not just replicating style—it’s perpetuating the ideologies embedded in those styles. The ethical imperative here is not just to audit the data but to interrogate the narratives it carries. Who gets to define what “good” writing looks like? Whose voices are amplified, and whose are erased? The answers are not found in the code but in the societal structures that shape the data in the first place.

Moreover, training and running generative models consume large amounts of energy, leaving a carbon footprint that is substantial even though precise figures are hard to pin down. The environmental cost of this “neutral” tool is a stark reminder that ethics are not confined to the digital realm; they ripple outward, touching the physical world in ways we are only beginning to understand. The pursuit of AI innovation must be tempered with a commitment to sustainability, lest we trade one form of exploitation for another.

The Human Element: Accountability in an Age of Autonomy

When an AI generates a harmful output—a piece of hate speech, a medical misdiagnosis, or a financial scam—who is held accountable? The developers? The users? The platform? The answer is as murky as the outputs themselves. Generative AI operates in a gray zone where responsibility is diffused, diluted, and often denied. This diffusion erodes trust, not just in the technology but in the institutions that deploy it. If a student uses an AI to write an essay, is the student cheating, or is the educational system failing to adapt? If a company uses AI to automate customer service, does it absolve itself of the emotional labor it offloads onto machines?

The ethical framework for generative AI must therefore include mechanisms for accountability that are as dynamic as the technology itself. Transparency is key—users should know when they are interacting with an AI, and developers should be required to disclose the limitations of their models. But transparency alone is not enough. We need robust governance structures that can adapt to the rapid evolution of AI, balancing innovation with protection. This might mean rethinking liability laws, establishing ethical review boards, or even creating new roles—AI ethicists, digital ombudsmen, and algorithmic auditors—whose sole purpose is to ensure that the machines we create serve humanity, not the other way around.

Yet, accountability is not just a legal or corporate concern—it’s a deeply human one. The more we delegate to AI, the more we risk losing the skills and sensibilities that define our humanity. Critical thinking, empathy, and moral reasoning are not just nice-to-haves; they are the bedrock of a functional society. If we outsource these qualities to machines, we risk becoming passive consumers of convenience, rather than active stewards of our collective future. The ethical imperative is clear: generative AI should augment human potential, not replace it.

The Future We Deserve: Building an Ethical AI Ecosystem

The path forward is not a straight line but a winding trail through uncharted territory. It demands collaboration across disciplines—technologists, philosophers, policymakers, and artists must come together to define what ethical AI looks like. It requires education—not just for developers, but for the public—to demystify the technology and empower people to use it responsibly. And it calls for humility, an acknowledgment that we cannot predict all the consequences of our creations, and that the most ethical AI is the one that is constantly questioned, refined, and, when necessary, dismantled.

Imagine a world where generative AI is not just a tool but a partner in ethical exploration. Where models are trained on datasets curated with the same care as a museum’s collection, where outputs are vetted not just for accuracy but for impact, and where the carbon footprint of innovation is as closely monitored as its financial returns. This is not a utopian fantasy—it is a blueprint for the future we must strive to build. The technology is here. The question is not whether we can create it, but whether we deserve to.

The fascination with generative AI is not just about its potential to dazzle us with its creations. It’s about the mirror it holds up to our own humanity—the good, the bad, and the uncomfortably ambiguous. The ethics of generative AI are not a constraint on innovation; they are the compass that will guide us through the storm. To ignore them is to court disaster. To embrace them is to shape a future where technology and humanity coexist in harmony, each enhancing the other’s potential. The course is set. The time to act is now.

The journey through the ethical landscape of generative AI is not for the faint of heart. It is a path fraught with complexity, contradiction, and the occasional existential dread. Yet, it is also a path illuminated by the promise of a better world—one where machines do not just think, but think with us, for us, and alongside us. The question is not whether we can afford to tread this path, but whether we can afford not to. The age of generative AI has arrived. The age of ethical reckoning is long overdue.
