In an era where digital interactions blur the boundaries between text, voice, and visual cues, the future of sentiment analysis is not just evolving—it’s undergoing a radical metamorphosis. Multimodal Sentiment AI, the cutting-edge fusion of textual, auditory, and facial expression analysis, is poised to redefine how we interpret human emotions in the digital realm. Gone are the days when sentiment analysis relied solely on the cold, static words of a message. Today, AI systems are learning to “listen” to the inflections in a voice, to “observe” the micro-expressions that flicker across a face, and to “synthesize” these disparate data streams into a cohesive emotional narrative. This isn’t just about understanding what someone says—it’s about grasping how they say it, why they say it, and what they might be feeling beneath the surface.

The implications are staggering. Imagine a customer service chatbot that doesn’t just respond to your words but also detects the frustration in your voice or the subtle tension in your facial muscles, adjusting its tone in real time to de-escalate a situation. Picture a social media platform that flags not just toxic text but also the subliminal aggression in a meme’s imagery or the passive-aggressive undertones in a sarcastic voice note. Multimodal Sentiment AI is the bridge between the explicit and the implicit, the spoken and the unspoken, the written and the visually conveyed. It’s not just a tool for businesses; it’s a revolution in human-computer interaction, one that promises to make digital communication richer, more empathetic, and profoundly more human.

But what does this future look like in practice? How will it transform industries, reshape user experiences, and challenge our ethical boundaries? Let’s embark on a journey through the landscapes of text, voice, and face—where AI doesn’t just analyze sentiment but decodes the very essence of human emotion.

The Alchemy of Text: Beyond Words to Emotional Nuance

Text has long been the bedrock of sentiment analysis, but the future lies in transcending its limitations. Traditional text-based sentiment analysis relies on lexicons and machine learning models that parse words for positive, negative, or neutral connotations. However, this approach often misses the subtleties of sarcasm, irony, or cultural context. The next frontier? Contextual sentiment alchemy, where AI doesn’t just read words but interprets the emotional DNA woven into them.

Consider the phrase, “Oh, great, another meeting.” A basic sentiment analyzer might flag it as positive due to the word “great.” But a multimodal system, trained on voice inflections and facial reactions, would recognize the dripping sarcasm in the tone and the eye-roll that accompanies it. This requires a fusion of semantic understanding and pragmatic analysis, where AI deciphers not just what is said but what is meant. It’s about detecting the emotional subtext in a Slack message, the passive-aggressive undertones of a pointedly passive-voice reply, or the unspoken frustration in a tersely written email.
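To see why a word-counting approach stumbles on that sentence, here is a minimal sketch of a lexicon-based scorer. The tiny `LEXICON` and the helper names are illustrative assumptions, not any particular library’s API; production lexicons are far larger, but the failure mode is the same.

```python
import re

# Tiny illustrative lexicon (an assumption for this sketch).
# Real lexicons hold thousands of scored words.
LEXICON = {
    "great": 1.0, "good": 0.5, "love": 1.0,
    "bad": -0.5, "terrible": -1.0, "hate": -1.0,
}

def lexicon_score(text: str) -> float:
    """Sum the polarity of every known word; unknown words count as 0."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return sum(LEXICON.get(tok, 0.0) for tok in tokens)

def label(score: float) -> str:
    """Map a raw score onto the usual three-way label."""
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

phrase = "Oh, great, another meeting."
print(label(lexicon_score(phrase)))  # positive
```

The scorer sees only “great” and has no access to tone or facial cues, so the sarcasm is invisible to it.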

Moreover, the future of text sentiment analysis will incorporate dynamic emotional modeling. Instead of static sentiment scores, AI will generate emotional timelines that map how a user’s sentiment evolves over a conversation. Did their frustration escalate? Did their enthusiasm wane? This granularity allows for more nuanced responses, whether in customer support, mental health chatbots, or even political discourse analysis. The goal isn’t just to know if someone is happy or angry—it’s to understand the why behind their emotional state.
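One way to picture an emotional timeline is as a list of per-message scores with a trend fitted through them. The sketch below assumes each message already carries a sentiment score in [-1, 1] from some upstream model; the `Turn` class and both function names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Turn:
    speaker: str
    score: float  # assumed per-message sentiment in [-1, 1]

def timeline(turns, speaker):
    """Collect one speaker's scores in conversation order."""
    return [t.score for t in turns if t.speaker == speaker]

def trend(scores):
    """Least-squares slope of score against turn index.

    A negative slope means sentiment is falling over the conversation.
    """
    n = len(scores)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(scores) / n
    num = sum((i - mean_x) * (s - mean_y) for i, s in enumerate(scores))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den

chat = [
    Turn("customer", 0.2), Turn("agent", 0.5),
    Turn("customer", 0.0), Turn("agent", 0.4),
    Turn("customer", -0.4), Turn("customer", -0.7),
]
customer = timeline(chat, "customer")
print(customer)                   # [0.2, 0.0, -0.4, -0.7]
print(round(trend(customer), 2))  # -0.31
```

A single averaged score for this customer would read as mildly negative; the slope shows that frustration is escalating turn by turn.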

The Symphony of Voice: Decoding the Unspoken in Decibels

Voice is the most primal form of human communication, carrying layers of meaning that text can never capture. The future of Multimodal Sentiment AI will harness the acoustic fingerprint of speech—the pitch, tempo, volume, and even the micro-pauses that betray emotion. This isn’t just about detecting anger or joy in a voice note; it’s about understanding the emotional topography of a speaker’s vocal landscape.

For instance, a prosodic analysis system could distinguish between genuine laughter and polite chuckling in a customer service call, or between a stressed employee’s rapid-fire speech and their usual cadence. AI models trained on vast datasets of emotional speech can now identify vocal biomarkers associated with specific emotions, such as the trembling pitch that signals anxiety or the clipped enunciation that hints at frustration. This has transformative applications in fields like healthcare, where AI could detect early signs of depression in a patient’s voice, or in call centers, where it could flag escalating customer distress before it boils over.
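The acoustic cues mentioned above (volume, pitch, pauses) can be approximated with very little code. This is a rough sketch on a synthetic signal using only the standard library; the function names, the 20 ms frame length, and the silence threshold are assumptions, and zero-crossing counting is a crude pitch proxy that only works on clean tones. Real systems use more robust pitch trackers and learned features.

```python
import math

def split_frames(samples, size):
    """Cut the signal into non-overlapping frames of `size` samples."""
    return [samples[i:i + size] for i in range(0, len(samples) - size + 1, size)]

def rms(frame):
    """Root-mean-square energy, a simple stand-in for loudness."""
    return math.sqrt(sum(x * x for x in frame) / len(frame))

def zero_crossing_pitch(frame, sample_rate):
    """Rough pitch for a clean tone: two sign changes per cycle."""
    crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a < 0) != (b < 0))
    return crossings * sample_rate / (2 * len(frame))

def prosody_features(samples, sample_rate, frame_ms=20, silence_rms=0.01):
    size = sample_rate * frame_ms // 1000
    frames = split_frames(samples, size)
    energies = [rms(f) for f in frames]
    voiced = [f for f, e in zip(frames, energies) if e >= silence_rms]
    pitches = [zero_crossing_pitch(f, sample_rate) for f in voiced]
    return {
        "mean_volume": sum(energies) / len(energies),
        "pause_ratio": 1 - len(voiced) / len(frames),
        "mean_pitch_hz": sum(pitches) / len(pitches) if pitches else 0.0,
    }

# Synthetic input: half a second of a 200 Hz tone, then half a second of silence.
RATE = 8000
tone = [0.3 * math.sin(2 * math.pi * 200 * n / RATE + 0.5) for n in range(RATE // 2)]
signal = tone + [0.0] * (RATE // 2)
features = prosody_features(signal, RATE)
print(features["pause_ratio"])  # 0.5
```

Features like these describe how something was said; mapping them to an emotion label still requires a model trained on labelled speech.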

But voice sentiment analysis isn’t just about individual interactions—it’s about the collective emotional resonance of a group. Imagine an AI system analyzing the sentiment of a live podcast or a virtual town hall, not just from the words spoken but from the collective sighs, laughter, and murmurs of the audience. This could revolutionize real-time audience engagement, allowing creators to adjust their tone or content based on the emotional pulse of their listeners. The future of voice sentiment AI isn’t just about understanding individuals—it’s about capturing the emotional zeitgeist of entire communities.

The Canvas of the Face: Where Micro-Expressions Speak Volumes

Facial expressions are the most immediate and involuntary indicators of emotion, yet they are also the most complex to interpret. The future of Multimodal Sentiment AI will master the art of facial micro-expression decoding, where AI doesn’t just recognize a smile or a frown but detects the fleeting, subconscious twitches that reveal true feelings. This goes beyond the six basic emotions (happiness, sadness, anger, fear, surprise, and disgust), to which contempt is often added as a seventh, to capture the emotional granularity of human interaction.

Consider the Duchenne smile—a genuine expression of joy that involves not just the mouth but the eyes, where the crow’s feet crinkle in a telltale sign of authenticity. A multimodal AI system could distinguish this from a polite, forced smile, which might lack the eye involvement. Similarly, it could detect the micro-expression of contempt—a brief, unilateral lip curl that flashes across the face in a fraction of a second, often betraying hidden disdain. These fleeting signals are the raw material of emotional truth, and AI is learning to read them with increasing accuracy.
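The distinctions in this paragraph can be expressed as simple rules over facial muscle activations. The sketch below assumes an upstream detector already supplies intensities between 0 and 1; the field names loosely follow the Facial Action Coding System (cheek raiser is AU6, lip corner puller is AU12), while the `FaceFrame` class and the thresholds are hypothetical choices for illustration.

```python
from dataclasses import dataclass

@dataclass
class FaceFrame:
    cheek_raiser: float      # eye-region involvement (AU6), 0..1
    lip_corner_left: float   # lip-corner movement on the left side, 0..1
    lip_corner_right: float  # lip-corner movement on the right side, 0..1

def classify(frame, on=0.5, asymmetry=0.4):
    """Rule-of-thumb labels; thresholds are illustrative, not calibrated."""
    left, right = frame.lip_corner_left, frame.lip_corner_right
    # A strong one-sided movement is the unilateral lip curl described above.
    if abs(left - right) >= asymmetry and max(left, right) >= on:
        return "one-sided lip movement (possible contempt)"
    # Both lip corners active: a smile. Eye involvement makes it Duchenne.
    if min(left, right) >= on:
        return "Duchenne smile" if frame.cheek_raiser >= on else "non-Duchenne smile"
    return "no smile"

print(classify(FaceFrame(0.8, 0.7, 0.7)))  # Duchenne smile
print(classify(FaceFrame(0.1, 0.7, 0.7)))  # non-Duchenne smile
print(classify(FaceFrame(0.0, 0.6, 0.1)))  # one-sided lip movement (possible contempt)
```

Hand-written rules like these are brittle; they are shown only to make the cues concrete, since a deployed system would learn such boundaries from labelled video.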

In practical terms, facial sentiment analysis will transform industries like market research, where brands can gauge genuine consumer reactions to advertisements in real time, or law enforcement, where AI could help detect deception during interrogations. But the ethical implications are profound. The ability to read micro-expressions from a distance, without consent, raises critical questions about privacy and surveillance. As AI systems become more adept at facial sentiment analysis, society will need to grapple with the balance between technological advancement and individual rights.

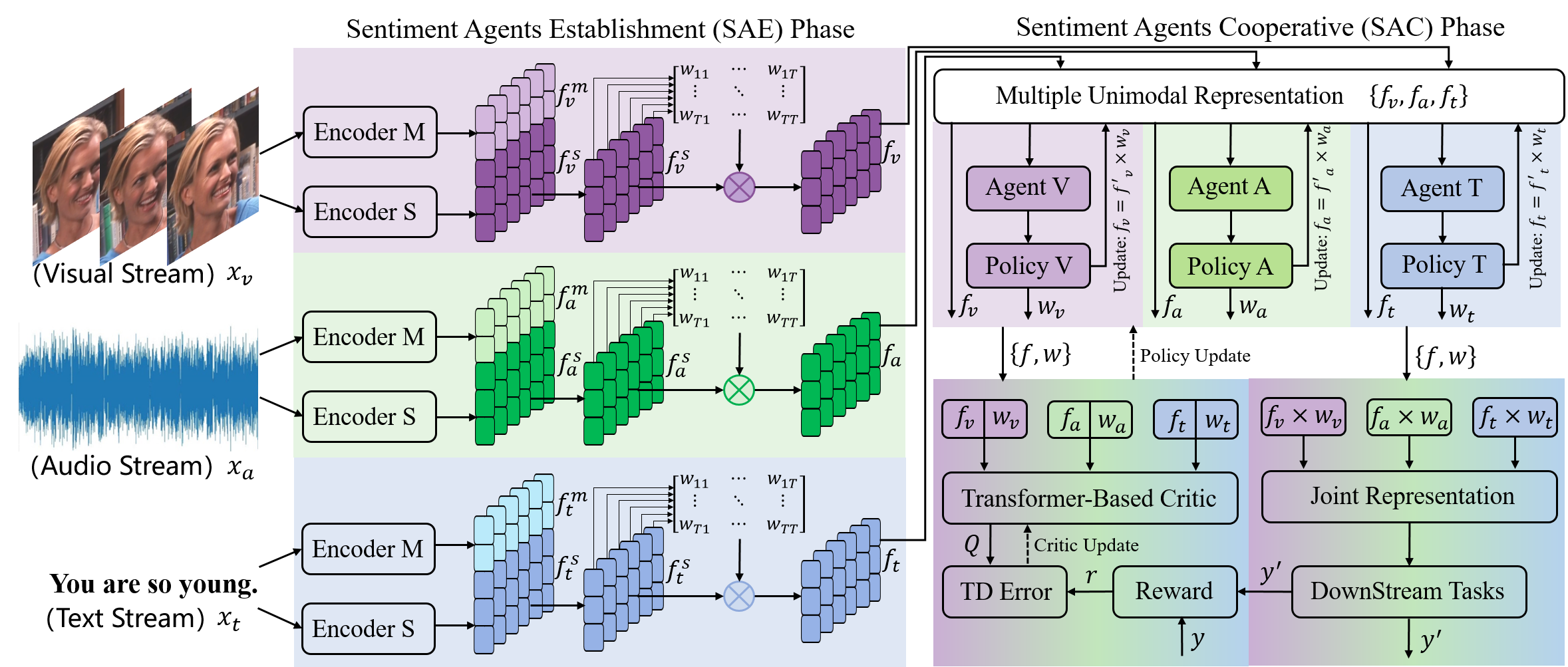

The Holy Grail: Fusing Text, Voice, and Face into a Unified Emotional Intelligence

The true magic of Multimodal Sentiment AI lies in its ability to synthesize disparate data streams into a cohesive emotional narrative. This isn’t just about running three separate analyses and averaging the results—it’s about creating a holistic emotional model where text, voice, and facial data interact dynamically. For example, if a user’s text is neutral but their voice is trembling with anxiety, the AI might infer underlying stress. If their facial expression shows a flicker of disgust while they type a positive review, the system could flag potential insincerity.
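A small sketch shows why averaging loses the interesting signal. It assumes each modality has already produced a score in [-1, 1]; the function names and the 0.6 disagreement threshold are hypothetical.

```python
def average(scores):
    """Naive fusion: the mean of all modality scores."""
    return sum(scores.values()) / len(scores)

def conflicts(scores, gap=0.6):
    """Return modality pairs whose scores differ by at least `gap`."""
    names = sorted(scores)
    return [
        (a, b)
        for i, a in enumerate(names)
        for b in names[i + 1:]
        if abs(scores[a] - scores[b]) >= gap
    ]

# A glowing written review delivered with a flicker of disgust.
review = {"text": 0.8, "voice": 0.4, "face": -0.6}
print(round(average(review), 2))  # 0.2
print(conflicts(review))          # [('face', 'text'), ('face', 'voice')]
```

The average reports a mildly positive customer, while the conflict list surfaces exactly the mismatch between face and words that hints at insincerity.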

This fusion requires advanced cross-modal attention mechanisms, where AI models learn to weigh the importance of each data stream in real time. It also demands contextual memory, where the system remembers past interactions to provide a more nuanced understanding of a user’s emotional state. For instance, if a customer has a history of frustration with a product, their current neutral text might warrant closer attention than the same message from a first-time user.
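As a rough illustration of attention-style weighting, the sketch below applies scaled dot-product attention from a single context query over three modality vectors. All vectors here are made-up numbers; in a trained model the queries, keys, and values would come from learned projections, which this sketch omits.

```python
import math

def softmax(xs):
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def attend(query, keys, values):
    """Scaled dot-product attention for one query over a few modality vectors."""
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, k) / scale for k in keys])
    dims = len(values[0])
    fused = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dims)]
    return weights, fused

modalities = ["text", "voice", "face"]
query = [1.0, 0.0]                             # hypothetical context vector
keys = [[0.2, 1.0], [2.0, 0.0], [1.0, 0.5]]    # one key per modality
values = [[0.0], [-0.8], [-0.3]]               # one-dimensional sentiment per modality

weights, fused = attend(query, keys, values)
strongest = modalities[weights.index(max(weights))]
print(strongest)  # voice
```

Here the voice key aligns best with the query, so the trembling voice dominates the fused score even though the text itself is neutral.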

The applications of this unified emotional intelligence are vast. In mental health, AI could detect early signs of depression by analyzing a patient’s speech patterns, facial expressions, and text messages over time. In education, it could help teachers identify students who are struggling emotionally, even if they’re not vocal about it. In entertainment, it could revolutionize gaming, where NPCs (non-player characters) respond dynamically to the player’s emotional state, creating truly immersive experiences.

But the real game-changer will be in emotional personalization. Imagine a digital assistant that doesn’t just know your preferences but understands your emotional triggers. It could adjust its responses based on whether you’re having a good day or a bad one, or it could suggest activities that align with your current mood. This isn’t just about convenience—it’s about creating technology that feels truly attuned to the human experience.

The Ethical Labyrinth: Navigating the Dark Side of Emotional AI

With great power comes great responsibility, and Multimodal Sentiment AI is no exception. The ability to decode emotions with such precision raises ethical dilemmas that society is only beginning to confront. Consent is the most pressing issue: Do users know when their emotions are being analyzed? Can they opt out? The use of facial recognition in public spaces, for example, could lead to a world where every smile, frown, or grimace is logged and monetized without explicit permission.

There’s also the risk of emotional manipulation. If AI can so accurately predict and influence emotions, it could be weaponized in advertising, politics, or even interpersonal relationships. Imagine a dating app that subtly nudges users toward certain emotional states to increase engagement, or a political campaign that tailors its messaging to exploit the fears and hopes of undecided voters. The line between personalization and manipulation is thin, and Multimodal Sentiment AI could blur it further.

Privacy is another critical concern. Emotional data is deeply personal, and its misuse could have devastating consequences. A hacker gaining access to a database of emotional profiles could blackmail individuals based on their psychological vulnerabilities. Employers might use emotional AI to screen candidates not just on their skills but on their predicted emotional resilience. The potential for discrimination and exploitation is immense, and without robust regulations, the future of Multimodal Sentiment AI could be dystopian rather than utopian.

To mitigate these risks, a multi-stakeholder approach is essential. Governments, tech companies, and ethicists must collaborate to establish emotional data governance frameworks that prioritize transparency, consent, and accountability. Users should have the right to know how their emotional data is being used and the ability to control its dissemination. AI systems should be audited for bias, and their emotional predictions should be explainable, not a black box of opaque algorithms.

The Road Ahead: A Future Where AI Feels (and We Feel Back)

The journey toward fully realized Multimodal Sentiment AI is still in its infancy, but the destination is nothing short of revolutionary. We are on the cusp of a world where technology doesn’t just understand our words but empathizes with our emotions, where digital interactions feel as rich and nuanced as face-to-face conversations. This future holds the promise of more empathetic customer service, more effective mental health tools, and more authentic human-computer relationships.

Yet, as we stride toward this future, we must tread carefully. The power to decode emotions is a double-edged sword, capable of healing or harming, connecting or controlling. The challenge ahead isn’t just technical—it’s philosophical. It’s about defining what it means to be human in an age where machines can not only mimic our emotions but potentially understand them better than we do ourselves.

Multimodal Sentiment AI is more than a technological marvel; it’s a mirror reflecting our deepest selves back at us. The question is: Will we use it to build a more compassionate world, or will we let it erode the very essence of human connection? The choice is ours—and the future is listening.
